Entrepreneurs are actively using AI-powered tools to create images that promote their brands and sell their products. Don’t fall behind.

Should we start worrying that artificial intelligence will soon overshadow designers, photographers, and graphics software manufacturers?

It’s about time. That is why we are trying to catch the speeding train to the future. The shift is already happening: neural networks are entering our everyday life and taking up a noticeable share of it.

Businesses are already running advertising campaigns created almost entirely by artificial intelligence. Bloggers actively use content generated by neural networks. Designers no longer come up with concepts from scratch but refine what the AI generates, and copywriters do the same with machine-written text.

Just yesterday, a Midjourney-generated image of the Pope in a down jacket went viral online. It spread so widely because the average person found it hard to tell that it was fake, so many people believed it.

And that’s just a fraction of the many ways graphical AI is embedded in our everyday lives.

Are we feeling the pressure?

We are. And in our products we use artificial intelligence in every way we can to keep up. Using one of our products, 24AI, as an example, let’s outline the state of AI product photography today and what to expect tomorrow.

AI tools can create far better product visuals than you think

Many of the images generated in 24AI are virtually indistinguishable from professional photographs, and in some cases they are even better. Generating graphics with AI is not just a matter of cutting out an item and pasting it onto a background. In the process, the neural network chooses the light, depth of field, surface texture, time of day, reflections, and reflected light (yes, reflected light too) depending on the material, and that’s not all.

There are already quite a few graphical neural networks on the market, but the colossus in this field is, of course, Midjourney. There, images of a specific product tend to deviate from the original because the neural network generalizes the result. In 24AI, the product is the basis, and it stays unchanged. Only the environment is generated, to emphasize the benefits of the product and present it beautifully to the discerning customer.

Dedicated graphic neural networks for eCommerce, like 24AI, add appropriate shadows, reflections, reflected light, and caustics to the object being integrated, so that the product blends realistically into the background.

They can recreate conditions that are expensive or difficult to stage with traditional methods.

They can even produce pictures with people and animals in the background, and it doesn’t affect the price. You pay for a subscription and create whatever you want, as much as you want.

All you need to get started is one photo of your product and a short text description of the environment in which you see your product in the final picture.

There are also neural nets where you can upload multiple photos of your product and create product images from different angles and in different conditions. Some allow you to change the lighting and shadows of the images using AI.

Not every generation succeeds, and many are far from perfect. Then again, a great result with traditional tools can be achieved only with the help of experts. What is certain is that the quality of AI product photos today is already high enough for most customers, for you and me. Many people can’t tell the difference between a generated image and a photo.

Creating product photos with neural networks is the best option for many businesses

Many entrepreneurs are forced to make trade-offs between speed, quality and cost of content due to limited resources.

1. Speed

Neural networks generate images, and tons of variations of them, in seconds; it takes humans much longer. Shooting the products alone can take a whole day, if not more.

Learning how to use neural networks takes time, yes. But traditional ways of creating visuals take many times longer. On top of the shoot itself there is preparation, collecting props, renting a studio, and post-processing, and learning Photoshop or 3D takes longer still. Overall, creating product images with AI is dramatically faster than with traditional methods.

2. Quality

In photography, in Photoshop or 3D, you can fine-tune everything, control consistency, and control the quality of the final result.

Neural networks, on the other hand, are not yet advanced enough in this respect, and you often have to generate a lot of unsuitable material before the result matches the idea. Even a complex prompt that seems to include every detail does not guarantee a good result.

But the situation is gradually changing.

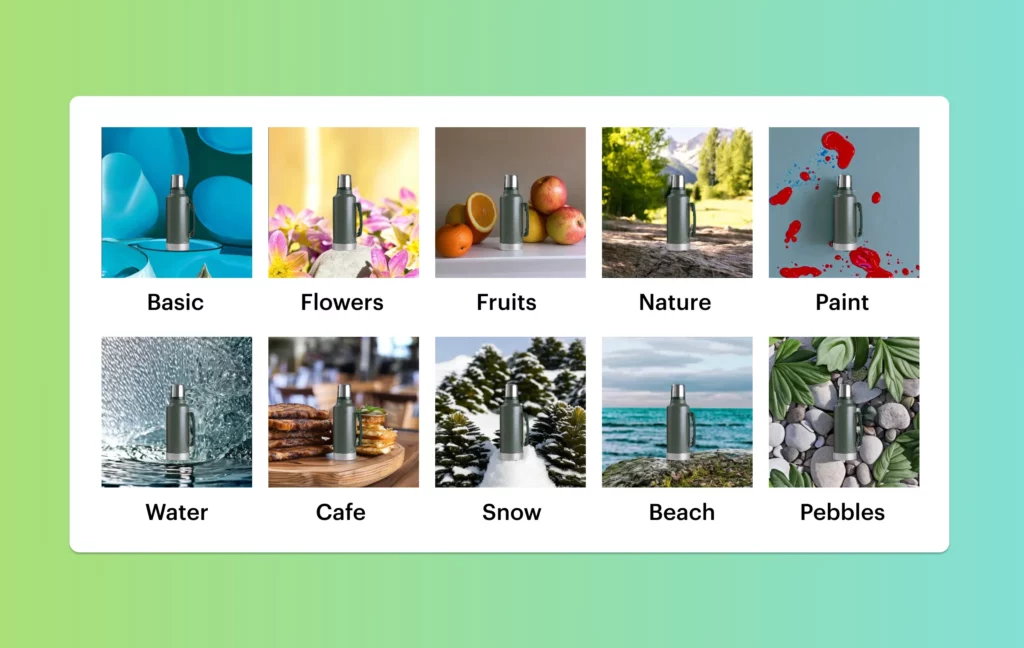

User-friendly interfaces are appearing. In 24AI, we use environment themes (“kitchen”, “outdoors”, “snow”, “cafe”, etc.). Choose a theme and generate an image. It couldn’t be easier.

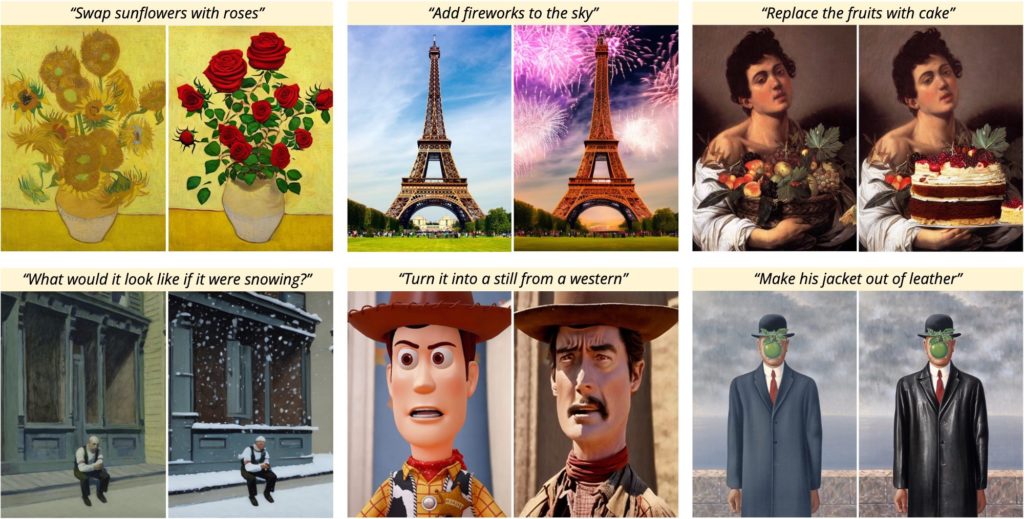

Neural networks can replace part of a generated image and regenerate that area until the result is satisfactory, leaving everything else untouched. This is called inpainting.
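To make the “everything else untouched” part concrete, here is a minimal sketch of the masking idea behind inpainting. It is not how 24AI or any particular model works internally: real inpainting models also use the surrounding pixels to decide what to draw. The function name `inpaint_composite` and the tiny grayscale images are assumptions made up for illustration.

```python
def inpaint_composite(original, generated, mask):
    """Combine two same-sized images given as lists of pixel rows.

    Where mask is 1, the regenerated pixel is used;
    where mask is 0, the original pixel is kept untouched.
    """
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("images and mask must have the same height")
    result = []
    for orig_row, gen_row, mask_row in zip(original, generated, mask):
        if not (len(orig_row) == len(gen_row) == len(mask_row)):
            raise ValueError("images and mask must have the same width")
        result.append(
            [gen if m else orig for orig, gen, m in zip(orig_row, gen_row, mask_row)]
        )
    return result


# Hypothetical 2x3 grayscale images: 10 = original pixel, 99 = regenerated pixel.
original = [[10, 10, 10], [10, 10, 10]]
generated = [[99, 99, 99], [99, 99, 99]]
mask = [[0, 1, 0], [0, 0, 1]]  # only two pixels are marked for replacement

patched = inpaint_composite(original, generated, mask)
print(patched)  # [[10, 99, 10], [10, 10, 99]]
```

Only the masked pixels change. If the new patch is not good enough, you regenerate and composite again with the same mask.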

You can edit images with prompts to change the background, the clothing material, or the character’s style; you can even change the weather. Sometimes it’s scary how fast it is all developing. Over time, all these functions will appear in every smartphone. The main message: start mastering neural networks now and optimize your budget with them.

Create with neural networks

Product photography isn’t always about minimalism; photographers often resort to creative visualization. You need to search for props, agree on the concept, and assemble mood boards, and you often can’t do it all by yourself. Here, too, AI comes to the rescue. It has plenty of ideas and generates them in seconds; you only need to keep up with entering prompts. You can make a painted background, put something in the foreground, place different objects on the surface, put light of a certain color in any part of the space, set its intensity, and much more.

Create many different images

Creating images simply, quickly, and in large quantities is what neural networks are all about. Achieving such variety with traditional tools would be impractical and too costly. With 24AI you can do it in seconds. Need more options? One click and it’s done. If you want different parameters, change the description. Experiment, and great visuals won’t be long in coming.

Since generated images are created programmatically, you can technically create hundreds of images for hundreds of products, automatically and much faster than you ever could the old-fashioned way. Save your resources; that is what we are working for.
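As a rough illustration of what “programmatically” can look like, the sketch below builds a list of generation jobs for every product and every theme. It does not call 24AI or any real API. The `build_jobs` function, the job fields, and the file names are hypothetical; the theme names are the ones mentioned earlier in this article.

```python
import itertools

# Environment themes mentioned in the article.
THEMES = ["kitchen", "outdoors", "snow", "cafe"]


def build_jobs(product_photos, themes, variations=1):
    """Return one job description per product, theme, and variation.

    product_photos maps a product name to its source photo (hypothetical paths).
    """
    jobs = []
    for (name, photo), theme in itertools.product(product_photos.items(), themes):
        for variant in range(variations):
            jobs.append(
                {"product": name, "photo": photo, "theme": theme, "variant": variant}
            )
    return jobs


# Hypothetical two-item catalog, three variations per theme.
catalog = {"mug": "mug.jpg", "lamp": "lamp.jpg"}
jobs = build_jobs(catalog, THEMES, variations=3)
print(len(jobs))  # 24 jobs: 2 products x 4 themes x 3 variations
```

Each job would then be handed to whatever generation tool you use. The point is that the list grows by multiplication, while the manual effort stays the same.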

3. Cost

24AI costs from ₽5,000 per month, and you can create an unlimited number of images. By comparison, a photographer’s day in Moscow will cost an average of ₽25,000 (up to 100 photos). 3D will cost even more.

AI photos are already far cheaper than traditional images, and at high volumes the per-image gap reaches orders of magnitude. They will only get cheaper every year.
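For a back-of-the-envelope comparison, the sketch below uses only the figures quoted above. It assumes the entry-level ₽5,000 plan, a single month, and a photographer who delivers the full 100 photos on every paid day. Retouching, props, and studio rent are left out, so treat the numbers as a rough estimate rather than a quote.

```python
AI_MONTHLY_RUB = 5_000         # entry-level 24AI price per month (from the article)
PHOTOGRAPHER_DAY_RUB = 25_000  # average photographer day in Moscow (from the article)
PHOTOS_PER_DAY = 100           # upper bound of photos per shooting day (from the article)


def photographer_cost(images):
    """Total cost of a shoot, paying for whole days only."""
    days = -(-images // PHOTOS_PER_DAY)  # ceiling division
    return days * PHOTOGRAPHER_DAY_RUB


def ai_cost_per_image(images_per_month):
    """Subscription price spread over the images generated in one month."""
    return AI_MONTHLY_RUB / images_per_month


for n in (100, 1_000, 10_000):
    print(n, ai_cost_per_image(n), photographer_cost(n) / n)
# 100 50.0 250.0
# 1000 5.0 250.0
# 10000 0.5 250.0
```

At 100 images a month the subscription is about five times cheaper per image. The gap grows with volume because the subscription price stays flat while every extra shooting day adds another ₽25,000.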

Neural nets also have problems

Spoiler alert: most of them will become irrelevant very soon.

Artifacts

Neural networks sometimes add strange outgrowths near products, so-called “artifacts”. Here, look:

Unrealistic people and fingers

We can’t explain why neural networks are still so bad at drawing hands and, less often, eyes and ears. But there are already players on the market who have learned to depict the whole person, face and body parts included, perfectly. You will probably hear about them soon. The takeaway: the problems of neural networks are simply the growing pains of a developing technology.

Product size

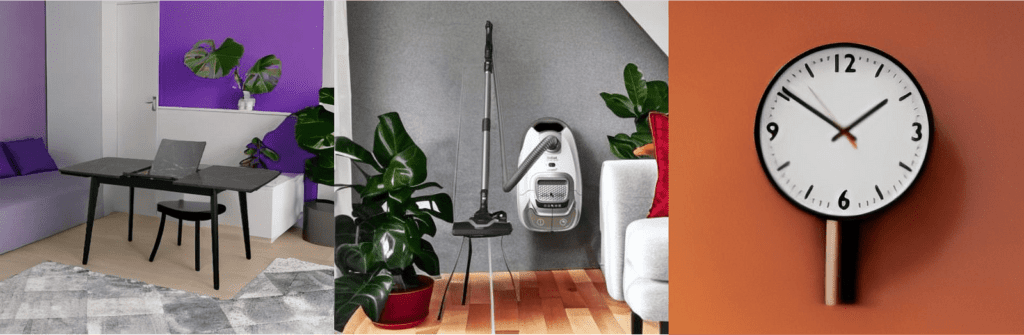

So far, neural networks do not grasp the importance of proportions in the final image well enough, so a vacuum cleaner can come out the size of a petal. Such examples are rare, but they happen. For now it makes sense to lower your expectations of generation and be patient.

Text

Text is the one thing nobody handles well yet. We generate images directly at 2048 by 2048 pixels, so text distortion in the first generation is barely noticeable, practically absent. But if you create an adaptation (which is a second generation), the text warps. Fixing this is one of our priority tasks for the near future.

The creation of product images by neural networks is actively developing today, though not without difficulties. We are sure that in 1-2 years these tools will become part of every eCommerce marketplace, and not only eCommerce.

DALL-E, Stable Diffusion, Midjourney, and a ton of other neural networks have launched. Big companies and venture capitalists are investing more and more in AI, which will lead to even faster development.

Soon neural networks will learn to render text and fine details reliably, put text on final images, turn pictures into videos, and even update images automatically based on click-through rates and conversions. These capabilities already exist in some form, and their use will evolve and spread.

Create your first product photos for free!
